The Spanish government this week announced a major overhaul to a program in which police rely on an algorithm to identify potential repeat victims of domestic violence, after officials faced questions about the system’s effectiveness.

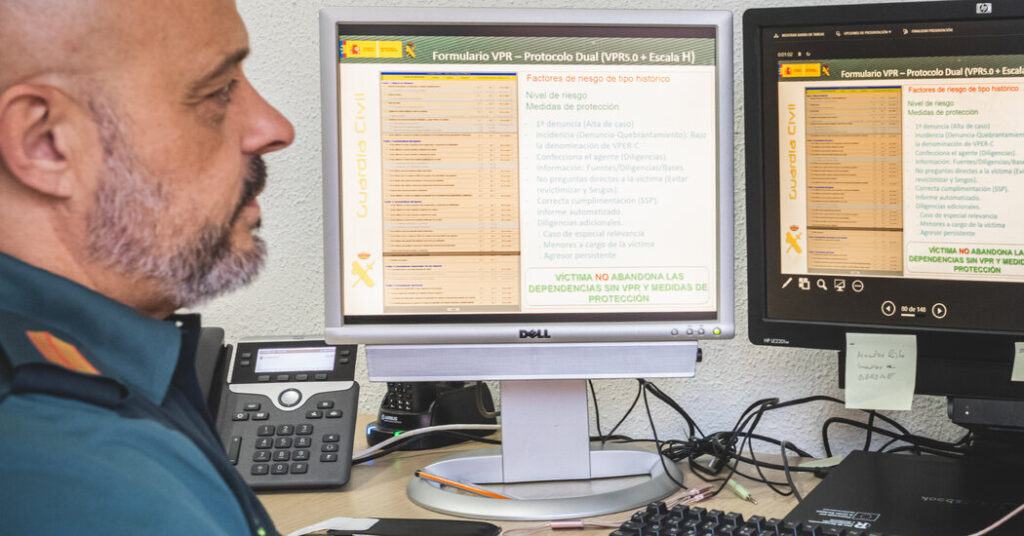

The program, VioGén, requires police officers to ask a victim a series of questions. Answers are entered into a software program that produces a score, ranging from no risk to extreme risk, intended to flag the women who are most vulnerable to repeat abuse. The score helps determine what police protection and other services a woman can receive.

A New York Times investigation last year found that the police were highly reliant on the technology, almost always accepting the decisions made by the VioGén software. Some women whom the algorithm labeled at no risk or low risk of further harm later experienced more abuse, including dozens who were murdered, The Times found.

Spanish officials said the changes announced this week were part of a long-planned update to the system, which was introduced in 2007. They said the software had helped police departments with limited resources protect vulnerable women and reduce the number of repeat attacks.

In the updated system, VioGén 2, the software will no longer be able to label women as facing no risk. Police must also enter more information about a victim, which officials said would lead to more accurate predictions.

Other changes are intended to improve collaboration among government agencies involved in cases of violence against women, including making it easier to share information. In some cases, victims will receive personalized protection plans.

“Machismo is knocking at our doors and doing so with a violence unlike anything we’ve seen in a long time,” Ana Redondo, the minister of equality, said at a news conference on Wednesday. “It’s not the time to take a step back. It’s time to take a leap forward.”

Spain’s use of an algorithm to guide the treatment of gender violence is a far-reaching example of how governments are turning to algorithms to make important societal decisions, a trend that is expected to grow with the use of artificial intelligence. The system has been studied as a potential model for governments elsewhere that are trying to combat violence against women.

VioGén was created with the belief that an algorithm based on a mathematical model can serve as an unbiased tool to help police find and protect women who might otherwise be missed. The yes-or-no questions include: Was a weapon used? Were there economic problems? Has the aggressor shown controlling behaviors?

Victims classified as higher risk received more protection, including regular patrols by their home, access to a shelter and police monitoring of their abuser’s movements. Those with lower scores received less support.

As of November, Spain had more than 100,000 active cases of women who had been evaluated by VioGén, with about 85 percent of the victims classified as facing little risk of being hurt by their abuser again. Police officers in Spain are trained to overrule VioGén’s recommendations if the evidence warrants doing so, but The Times found that the risk scores were accepted about 95 percent of the time.

Victoria Rosell, a judge in Spain and a former government delegate focused on gender violence issues, said a period of “self-criticism” was needed for the government to improve VioGén. She said the system could be more accurate if it pulled information from more government databases, including health care and education systems.

Natalia Morlas, president of Somos Más, a victims’ rights group, said she welcomed the changes, which she hoped would lead to better risk assessments by the police.

“Calibrating the victim’s risk well is so important that it can save lives,” Ms. Morlas said. She added that it was critical to maintain close human oversight of the system because a victim “must be treated by people, not by machines.”